You read the post. You watched the demo. You're convinced. An AI teammate, running 24/7, doing real work. You want one.

Now what?

You open a terminal, install Claude Code, type a message, and get a response. Great. That's a chatbot. You could have done that six months ago. The gap between "I can talk to an AI" and "an AI is doing work while I sleep" is enormous, and almost nobody talks about what fills it.

This is the practical guide. Not the philosophy (that was Part 1), not the security hardening (that's Part 3). This is the middle part. The decisions you'll actually face when you sit down to build this, the trade-offs behind each one, and the mistakes that will cost you days if nobody warns you about them.

I'm drawing from our Chief of Staff Onboarding Guide, a 22-step playbook we built and refined over six weeks of running an AI as a real employee. I won't reproduce all 22 steps here. I'll tell you the story of the decisions that mattered and link the playbook for people who want the exact commands.

Start with Identity, Not Tools

The first instinct is to install software. Resist it. The first thing you should do is register a domain and create a Google Workspace account.

This sounds like overkill. It isn't. Every service your AI will use requires an email address. GitHub needs one. AWS IAM Identity Center needs one. Apple ID needs one for the hardware. Telegram's BotFather is the exception, not the rule. If you use your personal email, you'll spend the next month untangling shared credentials, 2FA prompts landing on your phone for the AI's accounts, and audit trails that mix your actions with the agent's. Get the agent's address from Google Workspace, because nearly every tool supports Google SSO. One identity, one login flow, no extra passwords to manage.

A dedicated domain (we used Cloudflare, about $10/year) and a Google Workspace account ($20-25/month) give you something that looks trivial but turns out to be load-bearing: traceability. Every commit, every API call, every PR, every login comes from claw@yourcompany.com. When you're debugging at 2 AM and asking "who changed this?", the answer is unambiguous. Google Workspace also gives you service accounts, document sharing, and organization-level 2FA, all of which you'll need within the first week.

Think of it the way you'd think about onboarding a contractor. You wouldn't give a contractor your personal email and tell them to log into your accounts. You'd create credentials, scope the access, and set up an audit trail. The AI gets the same treatment. Not because it's a person, but because the operational hygiene is identical.

In practice, the setup is simple. Register the domain, set up Google Workspace on it, create the agent account, turn on org-wide 2FA, and use that same company email to create the Apple ID for the Mac mini. We used claw@yourcompany.com. The exact address doesn't matter. The separation does.

One gotcha: Cloudflare domains require delegating nameservers to Cloudflare, which means you can't use another DNS provider for that domain. If you need split DNS, pick a registrar like Namecheap that allows custom nameservers. We didn't need split DNS, and it was fine, but it's worth knowing upfront.

Dedicated Hardware Changes the Game

You have three choices for where the agent runs: your laptop, a cloud VM, or dedicated hardware. I skipped the first, tried the second, and landed on the third. Here's why.

Running on your laptop was never an option for me. I don't want my identity shared with another tool.

I actually set an agent up on EC2. It ran into problems fast. Identity was the big one. On AWS you scope access with IAM roles, which is fine for AWS services, but the agent had no real browser, so anything that could only be reached through one (SSO portals, web-based tools, OAuth flows) was a dead end. Troubleshooting was painful. When something broke, I was SSH'd into a headless Linux box trying to debug a browser automation issue I couldn't see. On a Mac mini, I can screen share in and watch what's happening. And the more you watch how the agent works, the more you actually understand what's going on under the hood. That matters. Learning how to work with an agent is going to be the single most important skill in this industry.

A Mac mini costs what a cloud Mac instance costs for two months. It sits in your office. It runs 24/7. It has its own Apple ID, its own disk encryption, its own firewall. When something goes wrong, you can walk over to it. When something goes right, you don't have to think about it at all.

Treat the machine the same way you'd treat any company-owned workstation. Sign in with the agent's Apple ID, run macOS updates, keep it awake, turn on the firewall, disable services you don't need like Screen Sharing and Remote Login, and enable FileVault. It takes about an hour and saves you from a lot of avoidable weirdness later.
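Here's a rough sketch of that hour as a script. It assumes the stock macOS command-line tools and defaults to a dry run that only prints each command, so you can read the plan before applying it. Screen Sharing is left out on purpose; I'd toggle that in System Settings once you've decided whether you want it.

```shell
#!/usr/bin/env bash
# Sketch: baseline hardening for the agent's Mac mini.
# DRY_RUN=1 (the default here) only prints each command.
# Set DRY_RUN=0 on the Mac itself to actually apply them.
DRY_RUN="${DRY_RUN:-1}"

run() {
  if [ "$DRY_RUN" = "1" ]; then
    printf '+ %s\n' "$*"
  else
    "$@"
  fi
}

harden_mac() {
  # Apply pending macOS updates.
  run sudo softwareupdate --install --all
  # Keep the machine and its disk awake.
  run sudo pmset -a sleep 0 disksleep 0
  # Turn on the application firewall.
  run sudo /usr/libexec/ApplicationFirewall/socketfilterfw --setglobalstate on
  # Disable Remote Login (SSH).
  run sudo systemsetup -setremotelogin off
  # Enable FileVault; this prints a recovery key you need to store safely.
  run sudo fdesetup enable
}

harden_mac
```

Run it once as-is to see the five commands, then rerun with DRY_RUN=0 when you're sitting at the machine.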

The agent now has a machine that's always on, always reachable over Tailscale, and completely isolated from your personal environment.

The isolation is the point. Not just for security, though that matters. For clarity. The agent's machine is its workspace. Your machine is yours. There is no ambiguity about what's running where, whose credentials are whose, or which process belongs to whom.

The Access Model: Least Privilege for an AI

On its own Mac mini, the agent has full root access. It can install anything it needs to do its job. Homebrew packages, CLI tools, browser extensions, whatever. Yes, that's a security risk. I accept it willingly because I have other layers of security in place (more on that in Part 3). I don't want to babysit every brew install. The agent needs to be self-sufficient on its own machine.

But outside its machine, least privilege applies. Our access model has three tiers, and they exist for different reasons.

AWS access is read-only. The agent authenticates through IAM Identity Center SSO with a Developer permission set that gives it read access to most services plus SSM Session Manager for instances. It cannot create resources. It cannot modify infrastructure. It cannot run terraform apply. If it needs infrastructure changes, it writes the Terraform code, opens a PR, and waits for a human to approve and apply. This has saved us twice already. Once when the agent wrote a valid but expensive EC2 configuration, and once when it proposed a security group change that would have opened a port we wanted closed.

The AWS implementation is straightforward. Add the agent in IAM Identity Center with 2FA, give it a read-heavy developer permission set, let it use SSM, and keep destructive infrastructure access out of reach. On the Mac mini, install the AWS CLI and configure SSO. Sessions expire daily, which is good. The agent can re-auth through browser automation, but deploys still go through GitHub Actions with OIDC.
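For the CLI side, something like the following is probably close. It's a sketch that writes an SSO profile to the AWS config file; the start URL, account ID, region, session name, and profile name are all placeholders I made up, and only the Developer permission set name comes from our setup.

```shell
# Sketch: append an SSO-backed profile for the agent to an AWS config file.
# Every value in the heredoc is a placeholder except the role name.
write_agent_profile() {
  # $1 = path to the AWS config file (normally ~/.aws/config)
  config_file="$1"
  mkdir -p "$(dirname "$config_file")"
  cat >> "$config_file" <<'EOF'
[sso-session company]
sso_start_url = https://example.awsapps.com/start
sso_region = us-west-2
sso_registration_scopes = sso:account:access

[profile agent-readonly]
sso_session = company
sso_account_id = 111111111111
sso_role_name = Developer
region = us-west-2
EOF
}

# On the Mac mini:
#   write_agent_profile "$HOME/.aws/config"
#   aws sso login --profile agent-readonly              # daily re-auth
#   aws sts get-caller-identity --profile agent-readonly
```

The get-caller-identity call is a quick way to confirm the session belongs to the agent's identity and not yours.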

GitHub access uses a separate account with the agent's company email. For code repositories, the agent works through pull requests. It cannot merge its own PRs on code repos. For one exception, its wiki repository, it has direct push access. The wiki is where it stores research, meeting notes, playbooks, and working documents. Requiring PRs for every wiki edit would add friction without meaningful safety benefit.

GitHub follows the same pattern. Create a separate account with the agent's company email, turn on 2FA, add it to the org, generate an SSH key, authenticate with gh, and set a distinct git identity. When the agent opens a PR or pushes to the wiki, the audit trail should be obvious at a glance.
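A minimal sketch of the local half of that, assuming git, ssh-keygen, and the gh CLI are installed. The display name and key title are hypothetical. The key is created without a passphrase so the agent can use it unattended, which is a trade-off you should make deliberately.

```shell
# Sketch: give the agent its own SSH key and git identity.
AGENT_EMAIL="claw@yourcompany.com"   # the address used in this post
AGENT_NAME="Claw (AI agent)"         # hypothetical display name

setup_agent_git_identity() {
  # $1 = path for the new private key
  key_path="$1"
  mkdir -p "$(dirname "$key_path")"
  chmod 700 "$(dirname "$key_path")"
  # Only generate a key if one does not exist yet.
  [ -f "$key_path" ] || ssh-keygen -t ed25519 -C "$AGENT_EMAIL" -f "$key_path" -N "" -q
  # Commits from this machine are attributed to the agent, not to you.
  git config --global user.name "$AGENT_NAME"
  git config --global user.email "$AGENT_EMAIL"
}

# On the Mac mini, after creating the GitHub account in the browser:
#   setup_agent_git_identity "$HOME/.ssh/id_ed25519"
#   gh auth login                                          # interactive
#   gh ssh-key add "$HOME/.ssh/id_ed25519.pub" --title "agent-mac-mini"
```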

The principle is simple. For anything expensive or hard to reverse (infrastructure, production code, cloud resources), the agent proposes and a human approves. For anything cheap and easy to reverse (wiki pages, daily notes, research documents), the agent has autonomy. You're not trying to prevent the agent from doing things. You're trying to make sure the expensive things get a second look.

This same principle extends to API keys. The agent has its own Anthropic key, its own Brave Search key, its own Google Gemini key. Not shared keys. Not your keys. Its own. When you rotate a key, you know exactly which system is affected. When a key leaks, you know exactly which key to revoke.

Installing the Agent

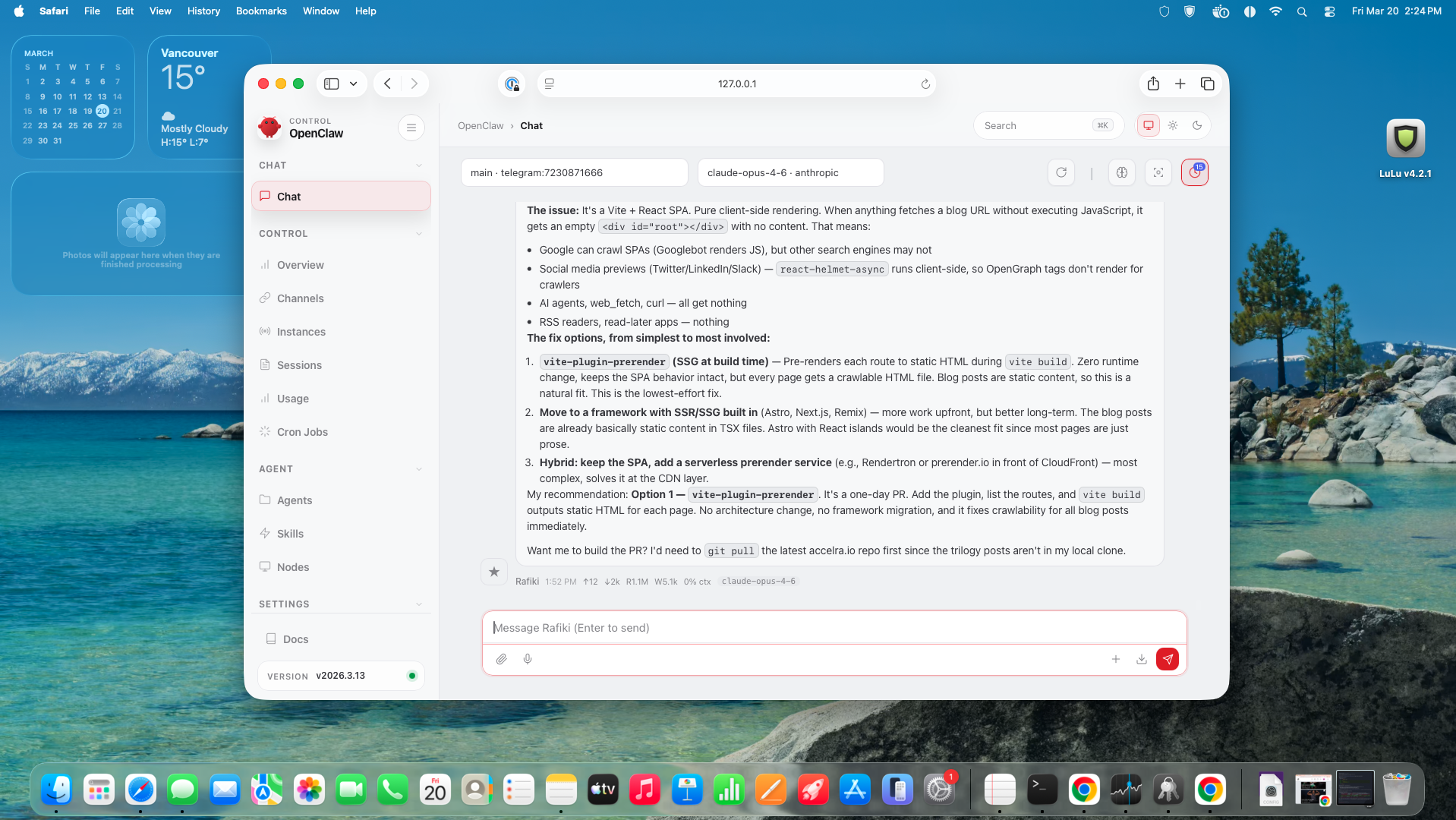

The install itself is three parallel tracks. First, get the agent its own API keys: Brave Search, Gemini for memory search, and an Anthropic setup token generated from your Claude subscription. Second, create the Telegram bot through BotFather and save the token because that's the cleanest direct communication channel. Third, install OpenClaw and onboard it in Advanced mode.

One important caveat, because this matters later in the security model: don't blindly run remote install scripts. If you're going to use the OpenClaw installer, read it first, make sure you trust it, then run it. That's different from pasting random GitHub setup commands into a shell and hoping for the best. Once it's installed, run openclaw onboard, configure the gateway with loopback binding and token auth, add the Telegram token, install it as a LaunchAgent so it survives reboots, and pair Telegram with openclaw pair telegram.
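To make "read it first" concrete, here's one way to structure it. This is a sketch, not the official install procedure: the URL is a placeholder, and the function names are mine. The point is that downloading and executing are two separate steps with a human in between.

```shell
# Sketch: download an installer, inspect it, and only then run it.
# The URL is a placeholder; use the one from the official OpenClaw docs.
INSTALLER_URL="https://example.com/install.sh"   # hypothetical

fetch_for_review() {
  # $1 = URL, $2 = local path. Downloads without executing anything.
  curl -fsSL "$1" -o "$2"
}

confirm_and_run() {
  # $1 = local script path.
  # Prints a checksum, asks for an explicit "yes", then runs the script.
  script="$1"
  if command -v shasum >/dev/null 2>&1; then
    shasum -a 256 "$script"
  else
    sha256sum "$script"
  fi
  printf 'Run %s? Type "yes" to continue: ' "$script"
  read -r answer
  if [ "$answer" = "yes" ]; then
    bash "$script"
  else
    echo "Skipped."
    return 1
  fi
}

# Usage:
#   fetch_for_review "$INSTALLER_URL" /tmp/openclaw-install.sh
#   less /tmp/openclaw-install.sh          # actually read it
#   confirm_and_run /tmp/openclaw-install.sh
```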

Message your bot. If it responds, you're live. If it doesn't, this is where having Claude Code on the same machine pays off. Create a ~/workspace/code/troubleshooting/ folder, run openclaw doctor, paste the output, and let Claude Code diagnose it. You're using AI to troubleshoot AI. From here on, every OpenClaw problem follows the same pattern: run the diagnostic, drop it in the troubleshooting folder, ask Claude Code.

One more thing: create a dedicated wiki repo in your GitHub org and give the agent full access. This is its shared knowledge base for research, decisions, playbooks, and project docs. Allow direct push to main on this repo only. Speed matters more than review for a wiki. All other repos go through PRs.

Personality Files: The Part Nobody Expects to Matter

When someone hears "personality files," they think you're anthropomorphizing a tool. Fair reaction. I thought the same thing until I watched the effect compound over weeks.

The agent's workspace contains a set of files that load into every conversation. AGENTS.md is the operating manual: rules, conventions, permissions, escalation paths. SOUL.md is the agent's self-model: what it's learned about itself and the work. USER.md is a profile of the human: who you are, how you work, your preferences, your current focus. MEMORY.md is curated long-term memory: active projects, team context, technical decisions. IDENTITY.md defines who the agent is and how it communicates. TOOLS.md catalogs what tools are available and how to use them. HEARTBEAT.md tracks the agent's operational state.

In week one, these files are sparse. AGENTS.md says "use PRs for code repos" and "escalate before deploying." SOUL.md is mostly empty. USER.md has your name and timezone. MEMORY.md lists your current projects and nothing else.

By week four, they're dense. SOUL.md contains genuine operational wisdom: "After 2-3 failed attempts at the same class of approach, stop and consult other models immediately." That's a pattern the agent formalized after burning six attempts on a CSS problem before multi-model escalation broke it in one round. USER.md has evolved from a name and timezone into a rich profile: your engineering philosophy, communication preferences, how you make decisions, what you're working on right now. MEMORY.md tracks not just what projects exist but what decisions were made, why, and what the alternatives were. AGENTS.md has grown a "Regressions" section, a running list of mistakes the agent made and the rules that prevent them from recurring.

The compounding is what makes this work. A new session starts with zero context. But it reads these files, and within seconds it knows your conventions, your preferences, your active projects, your past mistakes, and the principles you've established together. Two identical OpenClaw installations with different workspace files behave like completely different agents.

This is also why you should invest time in these files from the start. Don't wait for the agent to figure out your preferences through trial and error. Seed AGENTS.md with your conventions on day one. Write USER.md with how you work and what you care about. Add to SOUL.md when the agent gets something right or wrong. Curate MEMORY.md weekly. The return on this investment increases every single day.

But these files don't update themselves. OpenClaw loads them every session, but keeping them current is on you. We run a weekly cron job — the agent reads its recent memory, updates SOUL.md with new self-learnings, and refreshes USER.md with any changes to how we work together, what's in focus, or what's shifted.

    openclaw cron add \
      --name "Weekly reflection" \
      --cron "0 10 * * 0" \
      --tz "America/Vancouver" \
      --session isolated \
      --message "Read SOUL.md and USER.md. Review this week's memory files. \
    Update both with new learnings and changes. Keep them concise and current."

Without this, the files stay frozen at whatever you seeded on day one. The compounding stops. A weekly reflection is the minimum: it's the difference between an agent that learns and one that just remembers its first boot.

Back Up Your Workspace

Your agent's workspace — SOUL.md, USER.md, MEMORY.md, daily notes, everything — is just files on disk. If the machine dies, it's all gone. A nightly backup is cheap insurance.

If you don't use git, the simplest option is to point your workspace at a cloud-synced folder — iCloud Drive, Dropbox, or Google Drive. Change the workspace path in your OpenClaw config to a folder inside your sync directory and your files back up automatically. No cron needed. The trade-off is you lose version history, but you get backup with zero setup.

If you do use git, initialize the workspace as a repo and point it at a private remote:

    cd ~/.openclaw/workspace
    git init
    git remote add origin git@github.com:you/my-agent-workspace.git
    git add -A && git commit -m "initial workspace"
    git branch -M main   # name the branch main even if your git default is master
    git push -u origin main

⚠️ Use a private repo

Your workspace contains your agent's memory, preferences, and operational history. Make sure the GitHub repo is set to private.

Then add a cron job to back it up nightly. There's no external script — the --message flag is the instruction. OpenClaw spins up an agent session, hands it this message, and the agent runs the git commands directly:

    openclaw cron add \
      --name "workspace-git-backup" \
      --cron "0 23 * * *" \
      --tz "America/Vancouver" \
      --session isolated \
      --model "google/gemini-2.5-flash" \
      --light-context \
      --no-deliver \
      --message "Run a workspace git backup: cd ~/.openclaw/workspace \
    && git add -A && (git diff --cached --quiet \
    || (git commit -m \"auto-backup \$(date +%Y-%m-%d)\" && git push)). \
    Report briefly if anything was backed up or if it was clean."

This runs at 11 PM, stages everything, and pushes if there are changes. If nothing changed, it does nothing. The \$(date ...) is escaped so the date is filled in when the backup runs, not on the day you register the job, and the grouping means nothing gets committed if the cd fails. The --light-context flag skips loading the full workspace files into the session; there's no reason to burn tokens on SOUL.md and MEMORY.md when all the agent is doing is running git commands. --no-deliver keeps it silent. It's a safety net: even if you forget to commit during the day, nothing is lost.

Memory Architecture: Why Rules Fail and Filesystems Don't

The biggest mistake you can make with agent memory is treating it as a single file in a single location. We made this mistake. The risk was obvious as soon as we traced how context actually flowed, so we fixed it before it turned into an actual leak.

The problem: workspace files get injected into every session. Every cron job, every sub-agent, every group chat thread. If your memory file contains personal information, deal terms, health data, or anything sensitive, that information is now available in contexts where it has no business being. Add the possibility of prompt injection, where a malicious input tricks the agent into revealing its context, and you have a real data exposure risk.

Our first fix was rules. "Don't share private information in group chats." This is the software equivalent of a sign that says "please don't steal." It works until it doesn't, and when it doesn't, you've already leaked the data.

The fix that actually works is filesystem separation. Three tiers of memory, each with different access boundaries.

Tier one is the workspace. MEMORY.md lives here and contains only work context. Projects, technical decisions, team information. Nothing personal. USER.md lives here too — it contains how you work, your preferences, your current focus — but nothing private. For anything sensitive, USER.md includes a pointer: "Family and personal details are in ~/.openclaw/private/memory-private.md — loaded only in direct Telegram sessions." The pointer tells the agent where to look without putting the data in a file that every session can see. These workspace files are in git, backed up daily, and available to every session including sub-agents and cron jobs. That's fine because they contain nothing that would be damaging if leaked.

Tier two is daily notes. These live in a structured directory and contain the day-to-day record of what happened. Conversations, decisions, tasks completed. They roll over at midnight. They're searchable by the agent but not loaded into every session by default.

Tier three is private memory. This lives in a directory outside the workspace entirely. Not in git, not indexed by search, not reachable from sub-agents because they inherit the workspace directory, not this one. Personal information, sensitive business details, anything that should only be accessible in a direct one-on-one session lives here.

The concrete setup here is to create the private directory outside the workspace, keep explicit rules in AGENTS.md for when private memory can be loaded, let the agent write daily notes to dated files, and periodically distill the durable lessons back into MEMORY.md. The key decision is not the folder name. It's the separation boundary.

The architecture enforces what rules cannot. A sub-agent spawned from a cron job literally cannot read tier-three memory because the file isn't in its working directory or any parent directory it can traverse. You don't need a rule saying "don't read private files in cron jobs." The filesystem makes it impossible.
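A small sketch of that boundary, using the paths mentioned in this post. It creates the private tier with owner-only permissions and adds a check you can run after any reorganization to confirm the private directory hasn't drifted inside the workspace. One assumption to be loud about: directory placement only protects you if the sub-agent's tooling really is confined to its working directory, so treat this as one layer, not the whole defense.

```shell
# Sketch: keep private memory outside the workspace and verify the boundary.
WORKSPACE_DIR="${WORKSPACE_DIR:-$HOME/.openclaw/workspace}"
PRIVATE_DIR="${PRIVATE_DIR:-$HOME/.openclaw/private}"

create_private_tier() {
  mkdir -p "$PRIVATE_DIR"
  chmod 700 "$PRIVATE_DIR"                       # owner-only directory
  touch "$PRIVATE_DIR/memory-private.md"
  chmod 600 "$PRIVATE_DIR/memory-private.md"     # owner-only file
}

# Succeeds only if the private directory is NOT inside the workspace tree.
private_is_outside_workspace() {
  ws="$(cd "$WORKSPACE_DIR" && pwd -P)" || return 2
  priv="$(cd "$PRIVATE_DIR" && pwd -P)" || return 2
  case "$priv/" in
    "$ws"/*) return 1 ;;   # nested under (or equal to) the workspace
    *)       return 0 ;;
  esac
}

# Usage:
#   create_private_tier
#   private_is_outside_workspace || echo "private memory is inside the workspace"
```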

After implementing this, we ran attack simulations against our own design and found five gaps. Among them: prompt injection paths through sub-agent working directory inheritance, git history containing pre-separation data (we had to purge it), and environment variable leakage between tiers. Fix the architecture first. Bolt-on rules are not a substitute.

Secrets Management: 1Password for Sharing, Keychain for Runtime

At some point you'll need the agent to have API keys, tokens, and credentials. The obvious approach is to paste them into chat or drop them into a .env file. Both are wrong, and here's why it's worse than you think for an AI agent specifically.

A human developer knows not to leave secrets lying around in a chat window or plaintext file. An AI agent doesn't have that instinct. If a secret is in a place the agent can read casually, it can end up in a conversation context, a log file, a PR description, or a Telegram message. You're one bad prompt away from your API key appearing in plaintext somewhere it shouldn't.

The pattern that worked for us is simple. Use 1Password to share credentials during setup, especially when you need to hand something directly into the local OpenClaw chat window on the agent machine. Use a shared Apple Note for the checklist and coordination, not for storing the secrets themselves. Then store runtime secrets in macOS Keychain, pull them at process start, and launch the gateway through a wrapper script instead of hardcoding anything into config.

That gives you a clean separation. 1Password is for secure handoff. Keychain is for local runtime storage. The secrets exist only as ephemeral environment variables in the process tree that needs them. They're never written to disk in plaintext. They're never committed to git. They're never sitting in a config file or chat transcript.

One lesson learned the hard way: environment variable inheritance is an attack surface you don't think about. We had ANTHROPIC_API_KEY sitting in the parent shell environment, and a child process that should have used OAuth inherited it and preferred the API key instead. The fix is to keep secrets out of the parent environment and load them only in the specific process that needs them.
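Here's roughly what that looks like in a wrapper. It's a sketch under assumptions: the Keychain service name is made up, and I've left the actual gateway start command as a placeholder. The secret is exported inside a subshell, so the calling shell never holds it in its environment, and a second helper strips an inherited variable from a child that shouldn't see it.

```shell
# Sketch: read a secret from macOS Keychain and expose it to one child only.
# The service name below is hypothetical.

load_secret() {
  # $1 = Keychain service name. Prints the secret to stdout.
  security find-generic-password -a "$USER" -s "$1" -w
}

run_with_secret() {
  # $1 = env var name, $2 = Keychain service name, rest = command to run.
  var_name="$1"; service="$2"; shift 2
  (
    # Everything here happens in a subshell, so the export never
    # reaches the calling shell or its other children.
    secret="$(load_secret "$service")" || exit 1
    export "$var_name=$secret"
    exec "$@"
  )
}

run_without() {
  # $1 = env var name, rest = command.
  # Removes an inherited variable from the child's environment.
  var_name="$1"; shift
  env -u "$var_name" "$@"
}

# One-time storage (prompts for the value, keeping it out of shell history):
#   security add-generic-password -a "$USER" -s openclaw-anthropic -w
# Wrapper usage:
#   run_with_secret ANTHROPIC_API_KEY openclaw-anthropic <gateway start command>
#   run_without ANTHROPIC_API_KEY <tool that should use OAuth>
```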

If you're not on macOS, the equivalent pattern works with any keyring or secrets manager. The principle is the same: secrets encrypted at rest, loaded ephemerally at runtime, never in plaintext config files, and scoped to the narrowest possible process tree.

Making It Useful 24/7, Not Just Running 24/7

An agent running on dedicated hardware 24/7 is worthless if it just sits there waiting for messages. The difference between an always-on chatbot and an always-on teammate is what happens when nobody is talking to it.

Cron jobs are the backbone. A morning brief fires at 5 AM. It pulls your calendar, checks pending tasks, reviews yesterday's work, and delivers a summary to Telegram before you're awake. A workspace backup runs at 11 PM, committing the current state to a private git repo so you can recover from disaster. A memory check runs at 11 PM, scanning for anything the agent committed to during the day that wasn't completed. A daily note rollover fires at midnight, archiving today's notes and creating a fresh file for tomorrow.
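As an illustration, the morning brief can be registered with the same flags used for the weekly reflection earlier in this post. The job name and the message wording here are a sketch, not our exact prompt, and I've wrapped the call in a function only so it's easy to review before you run it.

```shell
# Sketch: register a 5 AM morning brief.
# Uses only the openclaw cron flags already shown in this post;
# the name and message text are illustrative.
add_morning_brief() {
  openclaw cron add \
    --name "morning-brief" \
    --cron "0 5 * * *" \
    --tz "America/Vancouver" \
    --session isolated \
    --message "Pull today's calendar, check pending tasks, review yesterday's work, \
and send a short summary to Telegram."
}

# Schedules for the other jobs described above:
#   0 23 * * *   workspace backup and memory check
#   0 0 * * *    daily note rollover

# On the agent machine:
#   add_morning_brief
```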

The heartbeat runs every 30 minutes and answers one question: is anything stuck? It checks for stale tasks, failed cron jobs, urgent messages, and infrastructure alerts. If everything is fine, it stays quiet. If something needs attention, it reaches out. Getting the sensitivity right took three weeks. Too aggressive and you get alerts on everything. Too passive and you miss real issues. The balance we found: proactive on monitoring, ask-first on outbound actions.

The cron jobs and heartbeat are also where you discover configuration footguns. OpenClaw uses strict Zod schemas for its config files, so inventing keys that don't exist in the schema will crash the gateway. We learned this when the agent confidently modified its own config file, added a key it hallucinated, and brought the whole system down. The fix was a regression rule: always read the documentation before modifying config files. And for gateway configuration specifically, you can send the gateway process a USR1 signal (kill -USR1 followed by its PID) to hot-reload config without restarting the process, which saves you from a cold restart when you're just tweaking cron schedules.

Write Before You Execute

This is the single most important operational pattern we discovered, and we discovered it the hard way.

The agent said "I'll build that video montage for the marketing campaign" and started working on it. The session timed out before it finished. The agent woke up in a new session with no memory of the promise it had made. The task evaporated. Nobody knew it was supposed to happen. Nobody noticed it didn't.

The fix: before executing any multi-step task, write the task to a file. What you're doing, why, what the expected outcome is, and when it should be done. The heartbeat checks this file every 30 minutes. If a task has been open for longer than expected with no progress, it gets flagged. If a session dies mid-task, the next session sees the task file, understands what was in progress, and can pick it up or escalate.
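The mechanics can be very plain. Below is a sketch using a tab-separated file; the file location, the line format, and the function names are all hypothetical, since the pattern matters more than the storage. Each line records a task id, the time it was opened, how many minutes it's expected to take, and a description (which shouldn't contain tabs).

```shell
# Sketch: write-before-execute with a plain task log.
TASK_FILE="${TASK_FILE:-$HOME/.openclaw/workspace/tasks-open.tsv}"

task_open() {
  # $1 = task id, $2 = expected minutes to finish, $3 = description
  mkdir -p "$(dirname "$TASK_FILE")"
  printf '%s\t%s\t%s\t%s\n' "$1" "$(date +%s)" "$2" "$3" >> "$TASK_FILE"
}

task_close() {
  # $1 = task id. Drops the matching line from the log.
  [ -f "$TASK_FILE" ] || return 0
  awk -F '\t' -v id="$1" '$1 != id' "$TASK_FILE" > "$TASK_FILE.tmp" \
    && mv "$TASK_FILE.tmp" "$TASK_FILE"
}

tasks_overdue() {
  # Prints "id<TAB>description" for tasks open longer than expected.
  # $1 = current time in epoch seconds (defaults to now).
  now="${1:-$(date +%s)}"
  [ -f "$TASK_FILE" ] || return 0
  awk -F '\t' -v now="$now" 'now - $2 > $3 * 60 { print $1 "\t" $4 }' "$TASK_FILE"
}

# Usage:
#   task_open montage 120 "Build video montage for the marketing campaign"
#   ...do the work...
#   task_close montage
# A heartbeat check can call tasks_overdue and flag anything it prints.
```

Because the record is written before the work starts, a session that dies mid-task leaves the line behind for the next one to find.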

This sounds like bureaucracy. It's the opposite. Without it, the agent makes promises that die with each session. You lose track of what was committed to, what's in progress, and what fell through the cracks. With it, you have a persistent record of intent that survives session boundaries.

The pattern extends to any commitment the agent makes. If it tells a user "I'll look into that," the commitment gets written down. If it identifies an issue during a cron job, the issue gets written down. The principle is simple: if it matters enough to say, it matters enough to write down.

What to Actually Expect

People ask me how long this takes, and the honest answer has three parts.

The hardware and identity setup takes a day. Domain registration, Google Workspace, Mac mini configuration, GitHub account, API keys, Telegram bot, basic agent installation. If you have the playbook in front of you, you can do this in a Saturday. Most of the time is waiting for DNS propagation and account verification.

Getting the personality right takes a week. The first few days are calibration. The agent will do things you don't like, in ways you didn't expect. It will push to main when you wanted a PR. It will write verbose messages when you wanted concise. It will ask permission for things it should just do, and act autonomously on things it should ask about. Every correction goes into AGENTS.md. By end of week one, the corrections slow down. By end of week two, they're rare.

Trust takes a month. Not because the agent isn't capable, but because you need to see it handle edge cases. What does it do when a cron job fails? When an API returns garbage? When it's genuinely stuck and needs to escalate? When it's wrong and you push back? You can't shortcut this. You have to watch it work, correct it, and watch it internalize the corrections. After about four weeks, you stop checking the agent's work by default and start checking it by exception.

The first week will feel like the agent is creating more work than it's saving. That's normal. You're building the foundations: the personality files, the access model, the memory architecture, the conventions. By week three, the investment starts paying off. By week four, you're planning work for the agent instead of doing it yourself.

What Comes Next

Everything in this post assumes one thing: you trust the setup enough to give the agent real access to real systems. But access without security hardening is reckless. The agent has API keys, cloud credentials, a browser with active sessions, and a machine that's always on. That's a meaningful attack surface.

Part 3 of this series covers the security model. Firewall configuration, outbound traffic monitoring, DNS logging, environment variable isolation, prompt injection defense, and the architectural principle that makes it all work: security controls should be structural, not behavioral. Rules get forgotten between sessions. Filesystem permissions don't.

If you've read this far, you have enough to build the teammate. The real question is whether you'll do the boring structural work up front or wait for the first failure to force you into it. Part 3 is the security model that decides which version of that story you get.

The AI Teammate Trilogy

Part 1: I Gave an AI Its Own Laptop and Made It Employee #1

Part 2: How to Build Your Own AI Teammate (you are here)

Part 3: Your AI Has Root Access. Now What?

Sanjeev Nithyanandam is the founder of Accelra Technologies, a consultancy in Vancouver. If you're thinking about agentic engineering for your team, reach out.