If your team still treats deploy as the finish line, you are flying blind.

PokerInk forced us to stop pretending process equals progress. We had to build an operating system where code, production signal, and decisions stay in one loop.

This is that loop. Not the pitch deck version. The version that survived real mistakes.

The setup

Small team. Real product pressure. Two founders and two Chiefs of Staff, with one AI training the other. No large platform team hiding behind process. That constraint matters, because this model has to work without a ten-person DevOps org.

The workflow is straightforward: push to main, let automated checks gate changes, deploy, then validate behavior in production. GitHub Actions drives delivery. Playwright covers end-to-end checks. PostHog and Sentry close the feedback loop after release.

Rafiki runs the daily rhythm around that system: monitor, report, and surface drift early so the team can decide faster.

We also standardized our base layers: an Infrastructure Boilerplate and a Next.js Boilerplate. Both are built by experienced engineers for AI-native execution and carry decades of practical engineering learning.

What changed from the old way

We moved away from long handoffs and delayed certainty. The old pattern was familiar: discuss, branch, review, merge, then find out days later what actually happened. The new pattern is shorter and less polite: ship smaller changes, get production evidence quickly, and decide with data instead of confidence.

The gain is not "more automation." The gain is shorter time between decision and truth.

Where we got punched in the face

We made bad calls. One clear example was content strategy around Instagram. We executed a question-format posting approach that looked reasonable on paper and failed in practice. Low engagement, wrong signal, wrong audience fit. We killed it.

That failure changed how we run decisions. Now high-impact moves get challenged before execution. Not to look smart. To stop expensive detours early.

Being wrong is normal. Staying wrong for weeks is optional.

How decision challenge works now

For meaningful bets, we run a structured challenge step before implementation. We compare independent model takes, map agreement and disagreement, and only then choose a path. The rule is simple: evidence beats eloquence.

This is not academic. It came from operational pain. We had polished plans with weak assumptions. The challenge step catches that earlier.

The practical result is fewer "confidently wrong" weeks.

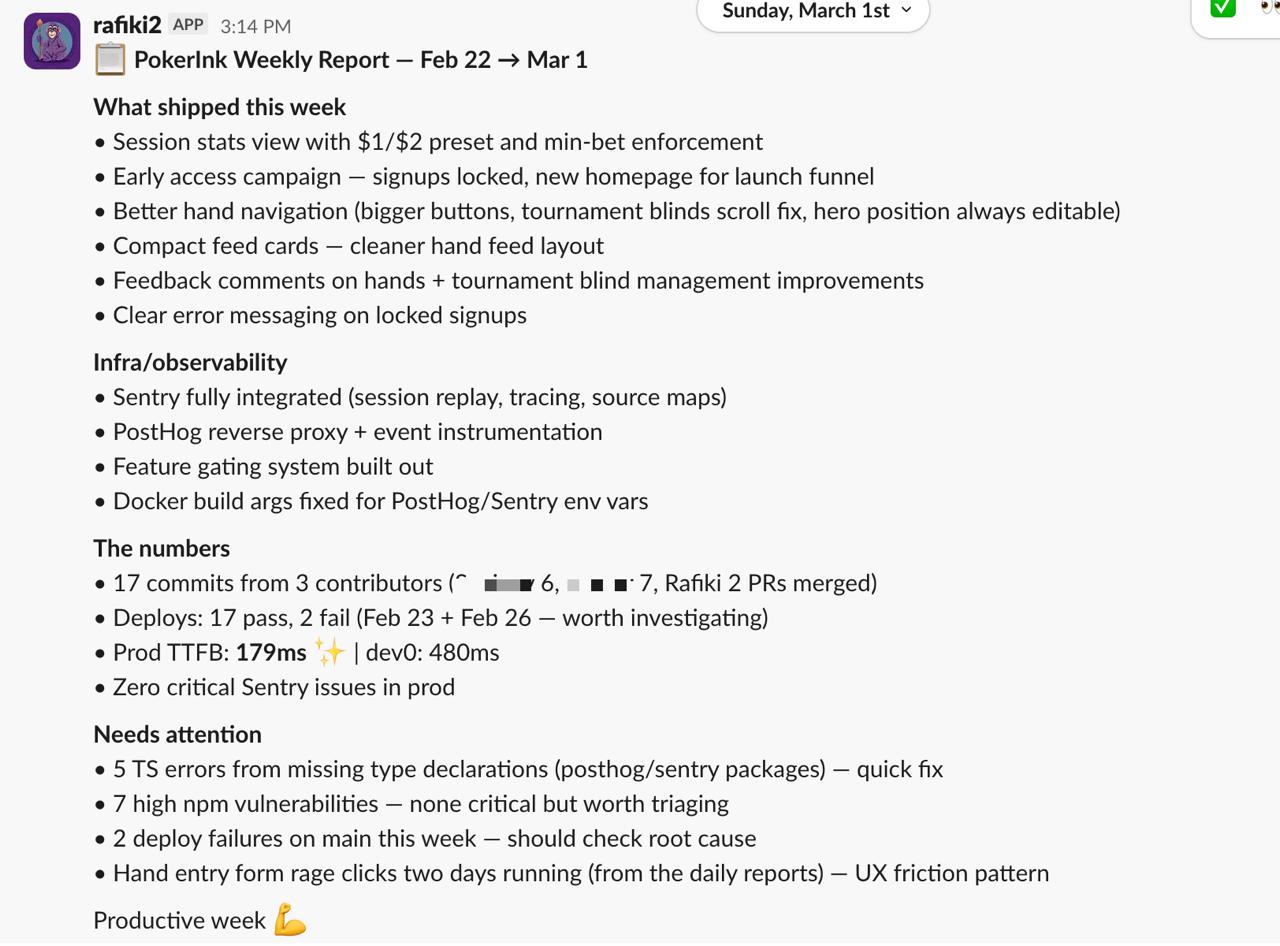

What we track every week

Every week has the same accounting: what shipped, what changed in production, what broke, what we learned, what gets cut, what gets doubled down. If we cannot answer those six questions, the week was noise.

This is also where Rafiki earns its keep. Continuous context, quick status surface area, and less time spent reconstructing history from Slack threads and dashboards.

The operating goal is boring and brutal: faster feedback, better decisions, less drama.

What we still do not know

We are still tuning where automation helps versus where it hides context. We are still refining which product signals matter most week to week. We are still adjusting how much challenge depth each decision deserves.

That is the point of running this in production. Not to claim final answers. To keep improving the loop under real constraints.

Related: PokerInk case study