The Thesis

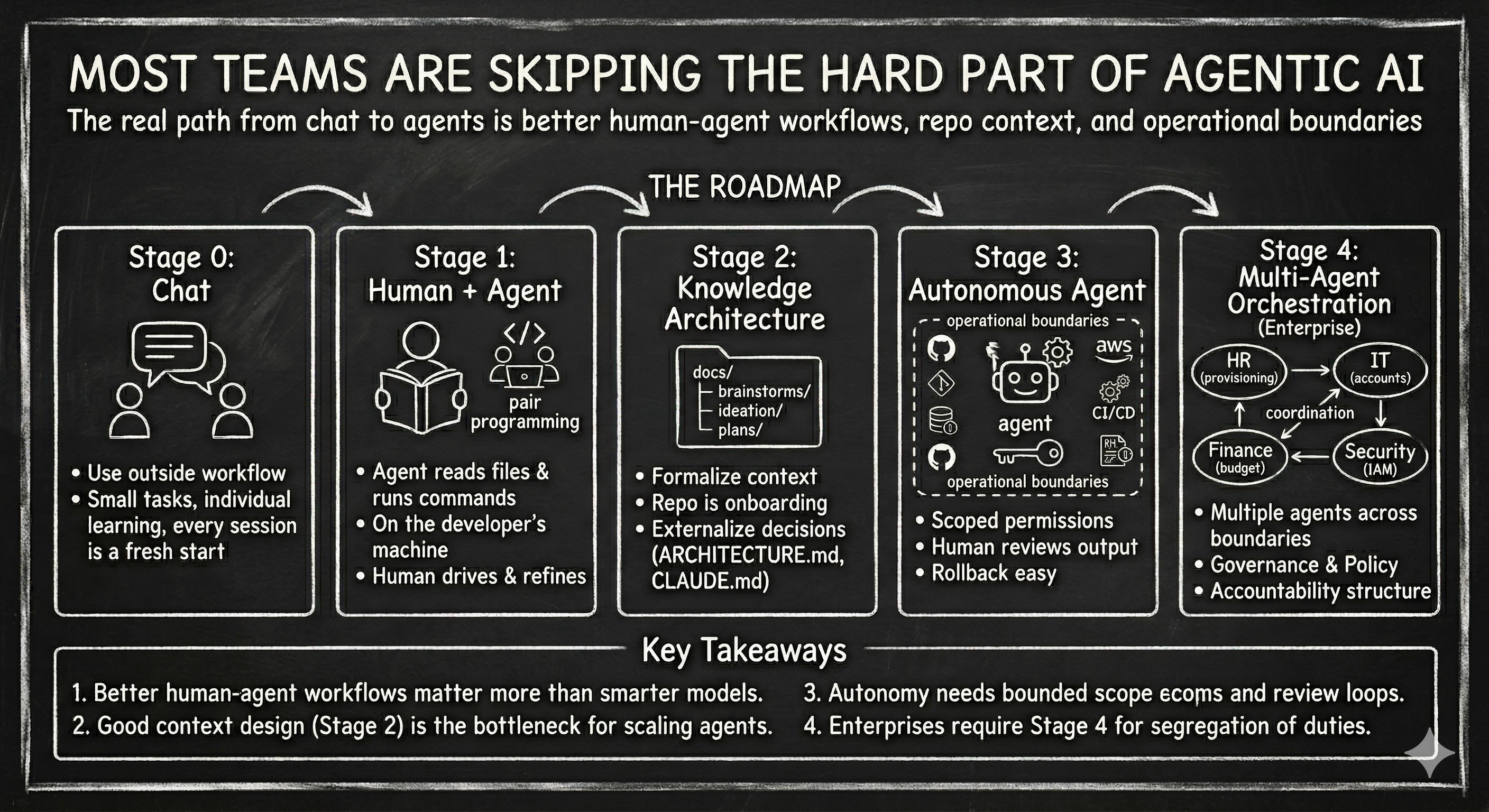

AI agents are coming to every company. Most won't be ready — not because of technology, but because they skipped the groundwork.

Almost every SaaS tool is racing to add AI capabilities. But the market is waking up to something bigger: the era of SaaS tools charging a fortune is ending. Companies can now build what they need at a fraction of the cost.

Chamath put it, the big question is whether any SaaS company's growth will be overtaken by a much cheaper AI-developed solution. The answer increasingly is yes.

"Software is eating the world. Now AI is eating software." — Marc Andreessen

"AI agents will use enterprise software as tools" — Jensen Huang

That means the real capability isn't buying another tool — it's building the internal muscle to work with AI effectively. AI agents are only as good as the skills they run, the context they operate in, and the feedback loops that refine both. Those things are built by humans, stage by stage. There are no shortcuts.

Not every company needs Stage 4. Every company needs Stages 1 and 2.

GenAI vs. Agentic AI

The underlying models are the same. The difference is tool access and autonomy.

GenAI: you give it input, it gives you output. It writes your email, summarizes your doc, generates code. It's powerful. Millions of people get real value from it daily. But it lives in a box. You are the hands and feet. You copy the output, switch to the right tool, and apply it yourself.

An agent has hands and feet. It can act on your environment. It reads your files, runs commands, hits APIs, observes the results, adjusts, and keeps going. You can ask it the same question ("why is this test failing?") but the difference is what happens next. Without an agent, you copy the error, paste it into a chat, get a guess based on the error message alone, then go back and copy more context (the test file, the function, the recent diff) and go back and forth narrowing it down. You're the one assembling the picture. With an agent, it reads the error, checks the git log, looks at what changed recently, reads the diff, and comes back with: "this test is failing because yesterday's PR changed the response schema but didn't update the assertion." It assembled the context itself.

The Stage 0 → 1 jump isn't smarter AI. It's giving AI the ability to act.

The Stages

Stage 0: GenAI Chat

Everyone knows ChatGPT, Claude, Gemini, Grok. They use it for email drafting, summarizing docs, brainstorming, explaining concepts, generating code snippets. This is real, daily value. It's not a toy.

But the human is the integration layer. You copy from the chat, switch to your IDE or terminal or browser, paste, adjust, apply.

Not agentic. But not worthless. This is the foundation of AI literacy that makes everything else possible.

Stage 1: Human + Agent

Every question gathers context. That's where it gets interesting.

An engineer installs Claude Code, Cursor, Copilot, or similar. The agent runs on their machine, uses their credentials, and can act on their environment: read files, run commands, execute code, interact with APIs.

The interaction is a loop, not a one-shot. You ask a question. The agent gathers context to answer it: reads files, checks git history, looks at configs. That answer surfaces something you didn't know, so you ask a follow-up. The agent gathers more. Each question deepens the picture for both of you. By the time you get to "ok do it," the agent has assembled a rich understanding of the problem that neither of you had at the start.

The adoption arc is natural: use common skills first, refine them, then build new ones. Skills are reusable instruction files (a SKILL.md) that teach an agent how to perform a specific task. They follow an open standard that works across Claude Code, Cursor, Gemini CLI, Codex CLI, and other agents. Nobody starts from scratch. You pick up a skill that already exists. You run it. It mostly works. When it doesn't, you fix it for your context and push the improvement.

One example is the Compound Engineering Plugin. You can go watch the video about it here.

The real value emerges when the team dynamic kicks in.

The custom skill feedback loop

- Engineer A creates a new skill that is very specific to your organization. It works for their task in a specific context.

- Engineer B picks it up, runs it against a different context.

- Different context exposes edge cases or opportunities to improve it.

- Engineer B fixes the bug or improves it and pushes the change.

- Engineer C refines the output format for their workflow.

- Eventually, someone encounters a gap. No skill exists for it. They build a new one.

- The cycle repeats: use → refine → build.

The craft feedback loop

It's not just the skills that get refined. The team gets better at operating agents. One engineer uses /ce:plan to scope work. A teammate discovers /ce:deepen-plan works better for complex features. Someone else figures out that reviewing a plan across multiple models before writing code catches design-level blind spots. CCL. This knowledge (when to use which command, which plugin, which workflow) compounds across the team and keeps evolving as the tools evolve.

The agent is the execution layer. Humans are the quality layer. Skills get hardened and operating knowledge deepens through distributed use, not through formal training programs. Every teammate who works with agents in a different context is expanding the team's collective understanding of how to use them effectively.

The access model is simple:

👤 Engineer on their machine

↕

🤖 Agent (Claude Code / Cursor / Copilot etc)

↕

Engineer's own credentials — agent sees what they see

Skills shared via git repo — no new infrastructure requiredYou don't need to mandate this. If the tooling is right and skills are shareable, cross-pollination happens organically. The manager's job isn't to build a program. It's to not block it.

Stage 2: Knowledge Architecture

The shift from organic sharing to intentional system.

The team formalizes how context gets created, structured, and maintained, so that both humans and agents can operate with full context from day one.

This is not "writing documentation." This is externalizing institutional knowledge: the why behind every decision, the dependencies between systems, the things that will break if you change something without understanding the history. This knowledge lives in people's heads. Stage 2 gets it into the repo, structured and discoverable, so it works identically for a new hire and a new agent session.

What a Stage 2 repo looks like

This looks like a lot of documentation. The good part about working with AI is you're not hand-writing all of this. You use AI to generate it. But you carefully review it. Your job is to catch the mistakes AI makes and help it not make the same mistake again. That's the same feedback loop from Stage 1 applied to knowledge.

docs/

├── brainstorms/ ← Raw thinking. "We might need X. Here's what requirements could look like."

│ ├── 2026-03-18-ai-hand-coach-requirements.md

│ └── 2026-03-23-pwa-install-guide-requirements.md

├── ideation/ ← Shaped thinking. Structured enough to evaluate, not yet committed.

│ └── 2026-03-27-security-posture-ideation.md

├── plans/ ← Committed decisions. Structured frontmatter, linked to PRs.

│ └── 2026-03-28-001-fix-idor-hand-access-control-plan.md

├── ARCHITECTURE.md ← How the system fits together

└── DEPLOYMENT_ARCHITECTURE.md

CLAUDE.md ← Agent entry point. Rules of engagement.CLAUDE.md is a markdown file you add to your project root that the agent reads at the start of every session. It sets coding standards, architecture decisions, preferred libraries, review checklists. Everything the agent needs to know to contribute effectively without asking basic questions. Think of it as an onboarding document written for an AI engineer joining your team.

Plan docs have structured context: scope, date, findings, change history:

# Security Audit Findings

**Date:** 2026-02-21

**Scope:** Full repository

**Total Findings:** 39

**Last Updated:** 2026-02-21 (C1 accepted risk, H1→M22 downgrade, Appendix A corrections, M21 added)An agent dropping into this repo for the first time can:

- Read

CLAUDE.md→ understands the rules of engagement - Read

ARCHITECTURE.md→ understands how the system fits together - Browse

docs/plans/→ sees the history of decisions and their rationale - Check

brainstorms/→ understands what's being considered but not committed

The repo is the onboarding. No two-hour walkthrough needed.

The self-reinforcing loop

Plan doc → PR → CLAUDE.md references the plans folder → next agent reads the plans → understands patterns → produces work following the same conventions → creates its own plan doc. Every plan doc makes the next agent interaction better.

What changes at Stage 2 vs Stage 1

- Stage 1: good engineers write decent docs because they're good engineers

- Stage 2: the team agrees on a structure (the frontmatter schema, the folder conventions, the naming patterns) and that agreement is what makes it compoundable

Context contracts: team agreements about how context is created and maintained so it's consumable by both humans and agents. The team says: "this is how we explain work in this repo, for everyone."

Multi-model review at the plan stage

The plan docs aren't just for humans and agents. They're the artifact you run through multiple frontier models for review before you write a single line of code. Every model has biases shaped by how it was built. Cross-checking a plan across Claude, Codex, and Gemini catches blind spots that any single model would miss. The knowledge architecture enables this: without structured plan docs, there's nothing to review.

Stage 3: Autonomous Agent

The agent operates off the machine. Human supervises.

The agent gets deployed on its own infrastructure: platforms like OpenClaw, A2A framework, or custom setups. It's no longer tethered to a developer's laptop session.

It has access to the same tools as Stage 1 (GitHub, AWS, CI/CD, monitoring, error logs, etc) but no human is actively driving. It picks up tasks, executes against the codebase, creates PRs, flags issues, connects errors to root causes.

This only works because of Stages 1 and 2:

- Stage 1 gave it battle-tested skills refined through human feedback loops

- Stage 2 gave it a self-describing codebase where the context is discoverable

What this looks like in practice

We run this today. As of March 2026, our production app ships about 30 deploys a month with a two-person team and two AI agents. The humans push to main. The agents open PRs. Every agent PR gets human review before merge. Average cycle time from PR open to production: 13.5 minutes.

Why starting with the tool fails

Most think the tool is the solution. They're buying an autonomous agent when the real work is building the skills, craft, and context that make an agent effective.

They point it at a repo with no CLAUDE.md, no architecture docs, no plan history, no structured context. The agent has tool access but zero understanding. It's like hiring a contractor, handing them repo access, and saying "figure it out." Compare that to handing them a repo where every decision is documented and the context is self-describing.

What changes at the access level

🤖 Agent on its own infrastructure

↕

🔧 GitHub · AWS · CI/CD · Monitoring · Slack

↕

Agent's own credentials — scoped to what it needs (least privilege)

Human reviews output — PRs, reports, escalationsFor smaller orgs, this may be the endgame. A well-scoped agent with good permissions, running autonomously, human reviewing output. Not every company needs multi-agent orchestration.

Stage 4: Multi-Agent Orchestration (Enterprise)

Multiple agents coordinate across organizational boundaries.

This stage exists because enterprise workflows cross permission boundaries that shouldn't be collapsed.

Why does HR have different access than Infrastructure? Why can't Finance modify production? Because segregation of duties exists for compliance, security, and accountability reasons. The same logic applies to agents.

When a workflow requires crossing permission boundaries where no single identity should have full access, that's Stage 4.

New hire onboarding:

- HR agent triggers provisioning (has access to employee PII)

- IT agent creates accounts and sets up machines (has access to identity systems)

- Finance agent allocates budget and approves spend (has access to financial systems)

- Security agent sets access policies based on role (has access to IAM)

Four domains. Four permission scopes. Four compliance boundaries. A single agent with all those permissions is a security incident waiting to happen.

Multi-agent isn't "more agents = more advanced." It's that the organizational structure demands it.

What's required: service identities for each agent scoped to their domain, an agent registry, cross-agent communication protocols (A2A protocol), full audit trail on every handoff, governance policies for what decisions require human approval, and an accountability model for autonomous decisions.

Who needs Stage 4: Enterprises with segregation of duties. Companies large enough that cross-team workflows involve different security domains. Not a 20-person startup.

The Skip Problem

Most start with the tool, not the team. The vendor sells the agent. Nobody sells the groundwork that makes the agent useful.

Without Stages 1 and 2, agents operate blind.

No battle-tested skills, no operating craft, no externalized institutional knowledge. The team hasn't learned how to direct agents or developed the judgment for which tools and workflows work in which situations. No architecture docs, no decision history, no dependency mapping between components, systems, and infrastructure. The agent doesn't know that changing the auth service affects the billing pipeline, or that the queue config is tied to three downstream consumers. It doesn't know why something was built a certain way at a certain time, or what will break where.

The result: agents make changes that look correct but silently break things downstream. Changes work in isolation but violate patterns and break dependencies nobody told the agent existed. The codebase degrades in ways that compound over time.

Both feedback loops are non-skippable. At Stage 1, skills get hardened and the team builds operating craft. At Stage 2, the team externalizes institutional knowledge and structures the codebase so agents can discover context. At Stage 3, the deployed agent runs battle-tested skills against a self-describing codebase, configured by humans who know how to direct agents effectively. Without that history, Stage 3 is just an expensive experiment.

Foundation Layer

1. Enterprise Agreement with Model Providers

Data isn't used for training. The legal side is covered. BAAs, DPAs, data residency as required. As of March 2026, the frontier models are Claude Opus 4.6, Codex GPT-5.4, and Gemini 3.1 Pro. You should have access to multiple, not just one.

2. Use Multiple Models

Every model has biases shaped by how it was built. Cross-check plans and approaches across multiple frontier models before you write code. The cheapest bug to fix is the one you catch at the design stage. The plan docs from Stage 2 are the artifact you run through multiple models for review.

3. Team Agreement on AI Use

What goes into prompts, what doesn't. How AI-generated output gets reviewed. This doesn't need to be heavy. It needs to exist.

Where the Human Sits

| Stage | Human Role | Agent Role | Relationship |

|---|---|---|---|

| Stage 0 | Does the work | Answers questions | You ask, it talks |

| Stage 1 | Drives and refines | Executes on the machine | Pair programming |

| Stage 2 | Builds the system | Operates within the system | Way-of-working shift |

| Stage 3 | Reviews output | Operates independently | Supervision |

| Stage 4 | Sets governance | Agents coordinate | Policy-driven |

Not every company needs Stage 4. Every company needs Stages 1 and 2.

Sanjeev Nithyanandam is the founder of Accelra Technologies, an agentic engineering consultancy in Vancouver. Follow the journey at Ship With Sanjeev.